2020-10-28

At Skyscrapers we manage multiple Kubernetes clusters for many customers. These clusters are based on our Kubernetes Reference Solution, a fully battle-tested and proven runtime platform for SaaS workloads.

Managing these clusters is not only about doing operations, but also about maintaining them: performing upgrades, rolling out new components and features, and so on. And we’re not talking about just one cluster, but many, most of them running production workloads.

Our key principles require us to scale as an organisation while, at the same time, offering a consistently high-quality service. How do you do that as a growing company? Well… at Skyscrapers, we have invested quite a bit in automating a lot of this maintenance work.

In this article we’d like to share some of that experience with you.

At the very heart of it all we use Infrastructure as Code to package our solutions. Our technology of choice for years has been Terraform.

Focussing on the Kubernetes platform, we divide our Terraform code into several “stacks”. Building on the modules concept, we define a stack as a full “deployable” unit. For more information about the concept, you can consult our documentation.

At a minimum, a full setup consists of the following stacks:

- networking: provides the VPC, generic subnets, NAT, route tables, etc.
- eks-cluster or aks-cluster: sets up the basic AWS EKS or Azure AKS cluster and some related resources, like auth providers, the configuration of core kube-system components, etc. For AKS, the node groups are also defined here.
- eks-workers: this stack is EKS-specific and instantiates one or more Kubernetes node groups (basically an AWS Auto Scaling group). Thanks to new features in Terraform 0.12 and 0.13, we plan to merge this back into the cluster stack.
- addons: by far the largest stack, responsible for managing all addon features. It deploys things like Ingress, our monitoring and logging stack, cert-manager, external-dns and so on.

To provide a single source of truth for the parameters of the various Terraform stacks, we define a single YAML file, the Definition File, which is fed into Terraform. An example of such a file:

```yaml
meta:
  CLUSTER_NAME: production.eks.example.com
  CLUSTER_TYPE: eks
  RELEASE_MODEL: stable

tfvars:
  common:
    aws_region: us-east-1
    teleport_token: ""
  cluster:
    k8s_base_domain: eks.example.com
    enabled_cluster_log_types: ["audit"]
    nodelocal_dns_enabled: true
  workers:
    - name: spotworkers
      instance_type: m5a.xlarge
      autoscaling: true
      min_size: 3
      max_size: 6
      spot_price: 0.1
  addons:
    sla: production
    kubernetes_dashboard_enabled: false
    vertical_pod_autoscaler_enabled: true
```

Now that we have the basic building blocks with Terraform and the Definition File, it’s time to piece everything together. Our choice here was to leverage Concourse CI to both generate and run pipelines for each of our customers.
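Before moving on to Concourse, here is a minimal Python sketch of how one definition file could feed several stacks in the EKS case.

It rests on some assumptions:
- The YAML has already been parsed into a dictionary, which keeps the sketch stdlib-only.
- The stack-to-section mapping, the output layout and the function names are hypothetical, not our actual tooling.

Each stack receives the `common` variables plus its own section. The result is written as a `terraform.tfvars.json` file, which Terraform loads automatically from its working directory.

```python
from __future__ import annotations

import json
from pathlib import Path

# Parsed form of the example definition file (YAML parsing left out so the
# sketch only needs the standard library).
DEFINITION = {
    "meta": {
        "CLUSTER_NAME": "production.eks.example.com",
        "CLUSTER_TYPE": "eks",
        "RELEASE_MODEL": "stable",
    },
    "tfvars": {
        "common": {"aws_region": "us-east-1", "teleport_token": ""},
        "cluster": {
            "k8s_base_domain": "eks.example.com",
            "enabled_cluster_log_types": ["audit"],
            "nodelocal_dns_enabled": True,
        },
        "workers": [
            {"name": "spotworkers", "instance_type": "m5a.xlarge", "autoscaling": True,
             "min_size": 3, "max_size": 6, "spot_price": 0.1},
        ],
        "addons": {
            "sla": "production",
            "kubernetes_dashboard_enabled": False,
            "vertical_pod_autoscaler_enabled": True,
        },
    },
}

# Hypothetical mapping from each Terraform stack to the tfvars section it reads.
STACK_SECTIONS = {
    "networking": None,  # assumed to need only the common variables
    "eks-cluster": "cluster",
    "eks-workers": "workers",
    "addons": "addons",
}


def stack_tfvars(definition: dict, stack: str) -> dict:
    """Common variables plus the stack's own section (stack values win on conflicts)."""
    sections = definition.get("tfvars", {})
    values = dict(sections.get("common", {}))
    section = STACK_SECTIONS[stack]
    own = sections.get(section) if section else None
    if isinstance(own, list):
        # List sections such as "workers" become one variable with the same name.
        values[section] = own
    elif isinstance(own, dict):
        values.update(own)
    return values


def write_tfvars(definition: dict, out_dir: Path) -> list[Path]:
    """Write <out_dir>/<stack>/terraform.tfvars.json for every stack."""
    written = []
    for stack in STACK_SECTIONS:
        target = out_dir / stack / "terraform.tfvars.json"
        target.parent.mkdir(parents=True, exist_ok=True)
        target.write_text(json.dumps(stack_tfvars(definition, stack), indent=2))
        written.append(target)
    return written


if __name__ == "__main__":
    print(json.dumps(stack_tfvars(DEFINITION, "eks-cluster"), indent=2))
```

Keeping a single file and deriving the per-stack inputs from it means a cluster's parameters are changed in one place, even though several stacks consume them.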

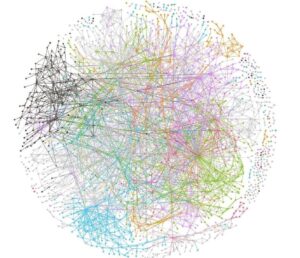

When I say we use Concourse to generate its own pipelines, I mean exactly that. Going one step further, you could say we do this Inception-style, with multiple layers:

- pipeline-generator: a task that loops over all defined customers and generates a customer-pipeline-generator job for each of them.
- customer-pipeline-generator: in turn generates specific pipelines for each of the customer’s enabled components, like Kubernetes, Teleport, Vault and so on.

Since Concourse pipeline definitions are all written in YAML, we use spruce in our generators to piece all the parts together.
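The two generator layers can be sketched in Python as plain data transformations. This is only an illustration under stated assumptions:
- The customer registry, resource name, job names and file paths are hypothetical.
- It uses Concourse’s set_pipeline step as one possible way for a job to create pipelines. That is not necessarily the mechanism we use.
- It skips the spruce merging and builds the structures directly.

```python
from __future__ import annotations

import json

# Hypothetical customer registry: customer name -> enabled components.
# In the setup described above, this information lives in customers/*-meta.yaml.
CUSTOMERS = {
    "acme": ["kubernetes", "vault"],
    "globex": ["kubernetes", "teleport"],
}

# Hypothetical git resource holding the rendered per-component pipeline files.
STACKS_REPO = {
    "name": "stacks-repo",
    "type": "git",
    "source": {"uri": "https://github.com/example/stacks.git", "branch": "master"},
}


def customer_pipeline_generator(customer: str, components: list[str]) -> dict:
    """Second layer: a job that sets one pipeline per enabled component."""
    plan = [{"get": "stacks-repo", "trigger": True}]
    for component in components:
        plan.append({
            # set_pipeline creates or updates the named pipeline from a config file.
            "set_pipeline": f"{customer}-{component}",
            "file": f"stacks-repo/pipelines/{customer}/{component}.yml",
        })
    return {"name": f"customer-pipeline-generator-{customer}", "plan": plan}


def pipeline_generator(customers: dict[str, list[str]]) -> dict:
    """First layer: one customer-pipeline-generator job per defined customer."""
    return {
        "resources": [STACKS_REPO],
        "jobs": [customer_pipeline_generator(name, components)
                 for name, components in sorted(customers.items())],
    }


if __name__ == "__main__":
    print(json.dumps(pipeline_generator(CUSTOMERS), indent=2))
```

Adding a customer or enabling a component then only means changing data. The next run of the generators picks it up without anyone editing pipelines by hand.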

A simple example:

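```shell
# Merge each customer's meta file with the shared parts, pruning the "meta" key
find ./customers/ -name "*-meta.yaml" -exec sh -c 'spruce merge --prune meta customer-resources-part.yaml customer-jobs-part.yaml "$1" > "$1.final"' _ {} \;

# Combine the base pipeline with all per-customer results
spruce merge base-pipeline.yaml customers/*.final > "$BASE/concourse-stacks-pipeline/pipeline.yaml"
```

As an end result, we get something like this: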

Now, how do we get from commit to production deploy?

We use two release channels in our process: insiders and stable.

The insiders channel simply follows the master branch of our git repositories. Every commit to master, usually arriving through small, reviewed PRs, triggers the pipelines for all insiders clusters: our own internal production cluster and, occasionally, some extra test clusters.

These changes are then tested and validated on the insiders cluster(s) through a combination of Kubernetes conformance tests and manual validation.

Once we deem a change ready, we create a new GitHub “release” of our packages, which in turn triggers all the pipelines following the stable channel.
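Here is a minimal sketch of how a channel could translate into what a cluster pipeline watches. Insiders pipelines watch the master branch through Concourse’s git resource. Stable pipelines watch a github-release resource, so only a published release triggers them.

It assumes that the RELEASE_MODEL field in the definition file’s meta section holds either insiders or stable; only stable appears in the example above. The owner and repository names are placeholders.

```python
from __future__ import annotations


def source_resource(channel: str, owner: str, repo: str) -> dict:
    """Concourse resource a cluster pipeline watches, depending on its release channel."""
    if channel == "insiders":
        # Every commit merged to master triggers the pipeline.
        return {
            "name": "stacks",
            "type": "git",
            "source": {"uri": f"https://github.com/{owner}/{repo}.git", "branch": "master"},
        }
    if channel == "stable":
        # Only a newly published GitHub release triggers the pipeline.
        return {
            "name": "stacks",
            "type": "github-release",
            "source": {"owner": owner, "repository": repo},
        }
    raise ValueError(f"unknown release channel: {channel!r}")


def resource_for_cluster(meta: dict, owner: str = "example", repo: str = "stacks") -> dict:
    """Pick the resource from the RELEASE_MODEL field of a definition file's meta section."""
    return source_resource(meta["RELEASE_MODEL"], owner, repo)


if __name__ == "__main__":
    print(resource_for_cluster({"CLUSTER_NAME": "production.eks.example.com",
                                "RELEASE_MODEL": "stable"}))
```

Because both channels expose the resource under the same name, the jobs downstream of it can stay identical. Only the trigger differs between insiders and stable clusters.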

As a bonus, most changes are safe to roll out, since we put them behind feature flags that are enabled on a per-customer or per-cluster basis.
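To make the per-cluster gating concrete: on the Terraform side, one common way to implement such a flag is a `count = var.vertical_pod_autoscaler_enabled ? 1 : 0` on the resources it guards. The sketch below shows the resulting effect, namely which clusters would actually receive a change gated by a given flag.

It is a hypothetical helper, not part of our tooling. It assumes that new flags default to off and that clusters opt in through the addons section of their definition file. The flag names are taken from the example above.

```python
from __future__ import annotations

# Hypothetical defaults: a new feature ships switched off and clusters opt in.
FLAG_DEFAULTS = {
    "kubernetes_dashboard_enabled": False,
    "vertical_pod_autoscaler_enabled": False,
}


def effective_flags(addons: dict) -> dict[str, bool]:
    """Defaults overridden by whatever the cluster sets explicitly; other keys are ignored."""
    flags = dict(FLAG_DEFAULTS)
    for key, value in addons.items():
        if key in flags:
            flags[key] = bool(value)
    return flags


def clusters_affected_by(flag: str, clusters: dict[str, dict]) -> list[str]:
    """Clusters on which a change gated by `flag` would actually be applied."""
    return sorted(name for name, addons in clusters.items() if effective_flags(addons)[flag])


if __name__ == "__main__":
    clusters = {
        "production.eks.example.com": {"vertical_pod_autoscaler_enabled": True},
        "staging.eks.example.com": {},  # hypothetical cluster that has not opted in
    }
    print(clusters_affected_by("vertical_pod_autoscaler_enabled", clusters))
```

A change behind a flag that no cluster has enabled produces an empty list. This is why such changes can travel through the stable channel with little risk.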

Unfortunately, the actual deployment of new features, bug fixes, etc. currently still needs manual intervention: a human validates the planned changes for each cluster before running the deploy jobs.
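One way to support that human check is to run terraform plan with the -detailed-exitcode flag. With that flag, the command exits with 0 when there is no diff, 1 on error and 2 when there are changes. This is sketched here as an assumption, not as a description of our current setup.

The hypothetical helpers below use those exit codes to separate stacks that need a human look from those with nothing to apply. The plan file name tfplan is also an assumption.

```python
from __future__ import annotations

import subprocess
from pathlib import Path


def classify_plan_exit(code: int) -> str:
    """Interpret the exit code of `terraform plan -detailed-exitcode`."""
    if code == 0:
        return "no-changes"    # empty diff: nothing for a human to review
    if code == 2:
        return "needs-review"  # non-empty diff: hold the deploy job for approval
    return "error"             # 1 means the plan itself failed


def plan_stack(stack_dir: Path) -> str:
    """Run a non-interactive plan in one stack directory and classify the result."""
    result = subprocess.run(
        ["terraform", "plan", "-input=false", "-detailed-exitcode", "-out=tfplan"],
        cwd=stack_dir,
    )
    return classify_plan_exit(result.returncode)
```

Running plan_stack for every stack of every stable cluster and keeping only the "needs-review" results would narrow down which plans someone actually has to read.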

This system has served us quite well, but our growing number of customers, combined with our growing maturity in testing and releasing, is pushing us towards further automation from commit to production, with as little human intervention as possible.

Some improvements we are already thinking about:

So there you have it: a small peek behind the scenes at how we manage the many environments of our customers. I hope you enjoyed the read! 🙂

Want to hear more or share your best practises? Don’t hesitate to reach out!

Header photo by Johannes Plenio on Unsplash.